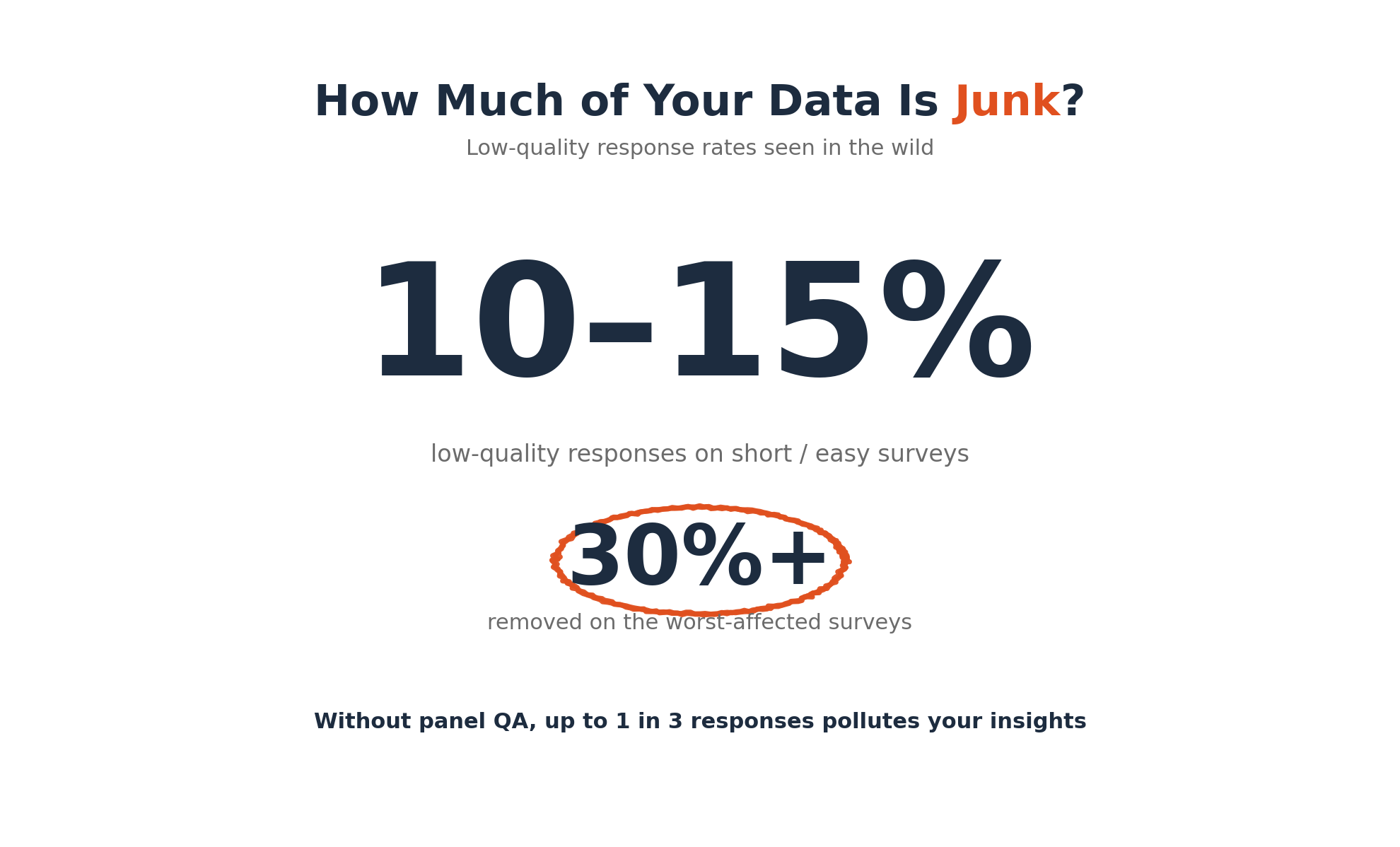

In market research, the quality of your data is everything. Without the right safeguards, you risk basing critical decisions on feedback from respondents who raced through your survey or provided meaningless answers. This is where panel quality checks come in, including speeding, gibberish, and other red flag checks. These are automated and manual procedures designed to identify and filter out low-quality survey responses from inattentive participants, bots, or fraudulent actors. The problem of low-quality data is bigger than many realize. One provider reported that 10-15% of respondents on short or easy surveys are consistently low quality, and that some surveys see over 30% of responses removed. These checks are therefore essential for protecting the integrity of your insights.

Modern research platforms like Yazi, which operates on WhatsApp to reach audiences in emerging markets, build these controls directly into their system. This helps teams capture reliable feedback at scale. This guide breaks down the key quality checks you need to know, explaining what they are, why they matter, and how they help you trust your survey results.

Spotting Inattentive Respondents

Some of the most common data quality issues come from human participants who are simply not paying close attention. They might be tired, bored, or just trying to get to the incentive at the end. These checks are designed to catch that behavior.

What is a Speeder Check?

A speeder check flags respondents who finish a questionnaire too quickly to have given thoughtful answers. These participants, known as “speeders”, often complete surveys in a fraction of the time an engaged person would take. A common rule is to flag anyone who finishes in less than one-third of the median completion time. For example, if the median completion time for a survey is 15 minutes, anyone finishing in under 5 minutes would be flagged for review. Studies have found that speeders can make up anywhere from 5% to 20% of a sample, making this a crucial check for data reliability.
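The one-third-of-median rule can be sketched in a few lines of Python. This is a minimal illustration, not any platform's actual implementation: the function name `flag_speeders`, the respondent IDs, and the sample durations are all made up for the example.

```python
from statistics import median

def flag_speeders(durations, fraction=1 / 3):
    """Return the IDs of respondents who finished faster than fraction * median.

    durations maps a respondent ID to completion time in seconds
    (hypothetical data layout, assumed for this sketch).
    """
    cutoff = median(durations.values()) * fraction
    return {rid for rid, secs in durations.items() if secs < cutoff}

# Made-up completion times in seconds; the median is 900 (15 minutes),
# so the cutoff is 300 seconds (5 minutes).
durations = {"r1": 900, "r2": 840, "r3": 960, "r4": 240, "r5": 1020}
print(flag_speeders(durations))
```

Using the median rather than the mean keeps the cutoff stable even when a few respondents leave the survey open for hours. Flagged respondents are candidates for review, not automatic removal.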

What is a Straightlining Check?

A straightlining check identifies participants who give the same answer to multiple questions in a row, particularly in grid or matrix questions. This behavior, also called non-differentiation, suggests the respondent is not carefully reading each item. They might just select “Neutral” for every statement to finish faster. This can seriously distort your data by masking true opinions and creating a false sense of consensus. Modern survey tools automatically flag these patterns, allowing you to filter out responses that come from survey fatigue rather than genuine consideration.
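One simple way to detect this pattern is to measure the longest run of identical consecutive answers in a grid. The sketch below assumes answers are stored as a list per respondent; the `max_run` threshold of 5 is an arbitrary illustrative value, not an industry standard.

```python
def longest_run(answers):
    """Length of the longest run of identical consecutive answers."""
    best = 0
    run = 0
    previous = None
    for index, answer in enumerate(answers):
        if index > 0 and answer == previous:
            run += 1
        else:
            run = 1
        best = max(best, run)
        previous = answer
    return best

def is_straightliner(answers, max_run=5):
    """Flag a grid when max_run or more consecutive answers are identical."""
    return longest_run(answers) >= max_run

# Made-up answers on a 1-5 scale for a six-item grid.
print(is_straightliner([3, 3, 3, 3, 3, 3]))  # every item answered "Neutral"
print(is_straightliner([1, 4, 2, 5, 3, 2]))  # varied answers
```

A long run is only a signal. Some respondents genuinely feel the same way about every item, which is why short grids usually deserve a more lenient threshold.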

What is an Attention Check?

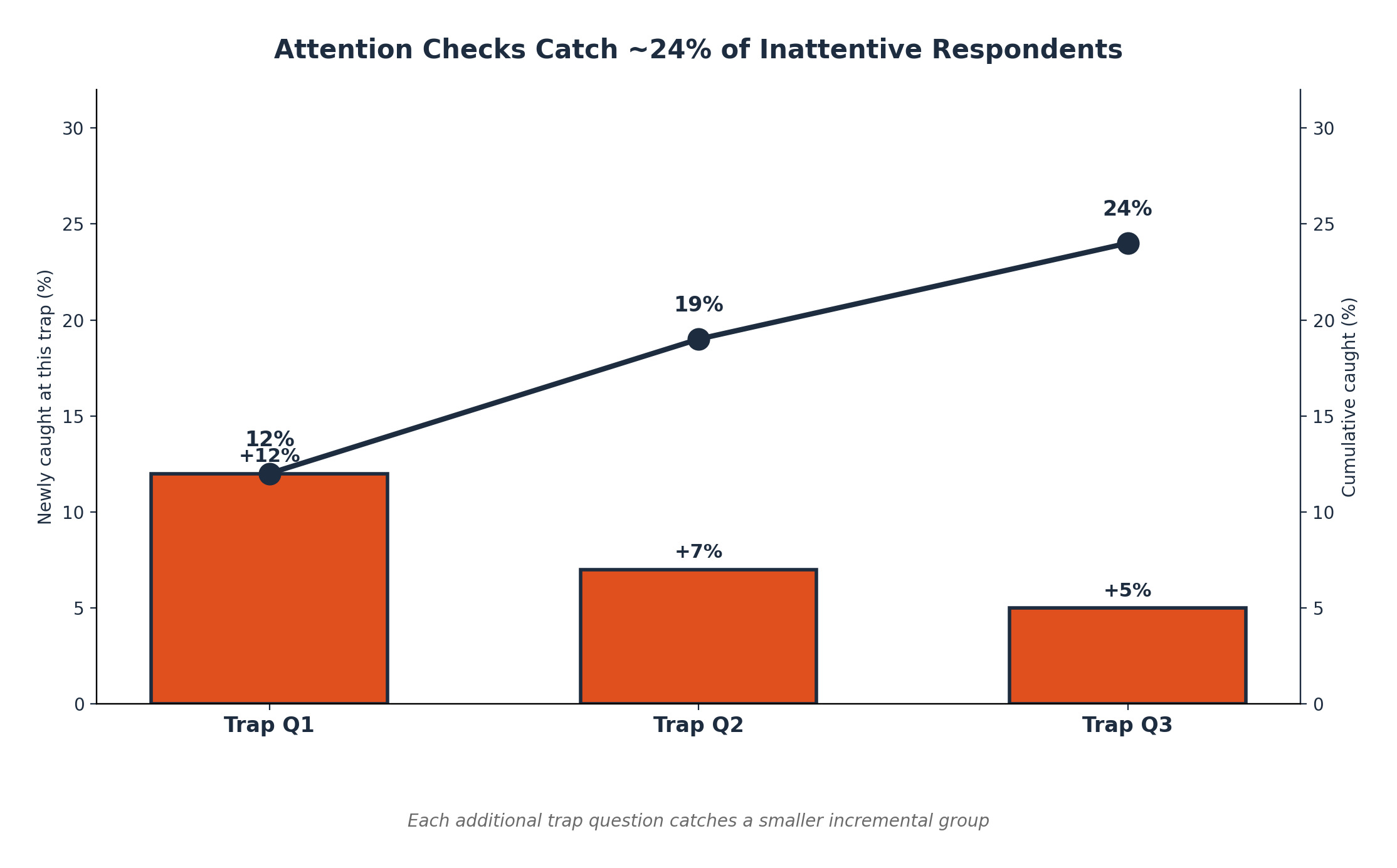

Attention checks, also known as trap questions, are designed to verify whether a respondent is actively paying attention. An attention check is usually a simple question with an obvious or instructed answer, like “Please select ‘Option C’ for this question”. Failing an attention check is a clear sign of an inattentive respondent. One study found that about 12% of participants failed the first trap question, with a second and third question catching an additional 7% and 5%, respectively.
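Scoring trap questions is straightforward: compare each answer with the instructed one and count the misses. The question IDs and answer labels below are hypothetical, and the function is only a sketch of the idea.

```python
def count_failed_traps(responses, expected):
    """Count trap questions whose answer differs from the instructed answer.

    responses: question ID -> answer given by one respondent.
    expected:  trap question ID -> instructed answer.
    A trap question left unanswered counts as a failure.
    """
    return sum(1 for qid, want in expected.items() if responses.get(qid) != want)

# Hypothetical trap questions and one respondent's answers.
expected = {"q7": "Option C", "q15": "Strongly disagree"}
responses = {"q1": "Yes", "q7": "Option C", "q15": "Agree"}
print(count_failed_traps(responses, expected))
```

Returning a count rather than a pass/fail verdict lets you treat a single slip differently from repeated failures.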

The Limitations of Attention Checks

While useful, attention checks are not a perfect solution. Experienced survey takers can often spot and pass simple traps, meaning they may only catch the most careless respondents. Overusing them can also backfire, making participants feel watched or untrusted, which could alter their subsequent answers. Furthermore, some researchers warn that automatically removing everyone who fails an attention check can unintentionally skew your sample by excluding certain demographics, which threatens the survey’s overall validity. For this reason, attention checks are best used sparingly and combined with other quality control measures.

Verifying Your Panel: Bots, Fakes, and Duplicates

Beyond simple inattention, researchers must guard against fraudulent or invalid entries. These checks ensure that every response comes from a real, unique, and qualified individual.

What is Bot Detection?

Bot detection involves identifying automated programs and blocking them from submitting survey responses. Online surveys are a common target for bots trying to claim incentives. To fight this, platforms use tools like CAPTCHAs and invisible fraud scores to screen out non-human participants. While bots can sometimes bypass simple multiple-choice questions, they often fail on open-ended questions, submitting copied text or AI-generated gibberish that doesn’t fit the context. These are key red flags that robust panel quality checks are designed to catch.

What is Duplicate Response Detection?

This process identifies when the same person submits a survey more than once. If one person’s opinions are counted multiple times, it can significantly skew the results. This is a surprisingly common issue, with one industry analysis estimating that 4% of unique devices attempted to take the survey more than once. To prevent this, platforms use IP address blocking and more advanced digital fingerprinting to identify and remove repeat entries, ensuring each participant is counted only once.
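The core of fingerprint-based deduplication can be sketched as follows. Real fingerprinting combines many more signals than this; the three fields used here (`ip`, `user_agent`, `screen`) are assumptions chosen for illustration, and hashing them with SHA-256 is just one convenient way to build a comparable key.

```python
import hashlib

# Hypothetical signals; production systems typically use many more.
FINGERPRINT_FIELDS = ("ip", "user_agent", "screen")

def fingerprint(submission):
    """Hash a handful of device signals into a single comparable key."""
    raw = "|".join(str(submission.get(field, "")) for field in FINGERPRINT_FIELDS)
    return hashlib.sha256(raw.encode("utf-8")).hexdigest()

def drop_duplicates(submissions):
    """Keep only the first submission seen for each fingerprint."""
    seen = set()
    unique = []
    for submission in submissions:
        key = fingerprint(submission)
        if key not in seen:
            seen.add(key)
            unique.append(submission)
    return unique

submissions = [
    {"id": 1, "ip": "203.0.113.5", "user_agent": "UA-1", "screen": "1080x1920"},
    {"id": 2, "ip": "203.0.113.9", "user_agent": "UA-2", "screen": "720x1280"},
    {"id": 3, "ip": "203.0.113.5", "user_agent": "UA-1", "screen": "1080x1920"},
]
print([s["id"] for s in drop_duplicates(submissions)])
```

Keep in mind that people on a shared network or a shared device can produce identical signals, so a match is better treated as a reason to review than as proof of fraud.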

What is Impostor Screening?

Impostor screening is about verifying that participants are who they claim to be. In some studies, people may misrepresent themselves to qualify for a survey they wouldn’t otherwise be eligible for. To prevent this, researchers use several techniques:

This is especially critical in emerging markets. Platforms like Yazi address this by managing vetted audience panels across 13 African countries, using built-in fraud checks and periodic panel recalibration to maintain a high-quality, verified respondent pool. If you need to reach verified audiences in challenging markets, you can get in touch with Yazi to learn more.

Cleaning Up Qualitative Data

Open-ended questions provide rich, nuanced insights, but they also open the door to low-quality text answers. A specific suite of panel quality checks, covering speeding, gibberish, and other red flags, is needed to manage this.

Open-Ended Response Quality Checks

This is a broad term for the methods used to evaluate free text answers. Instead of just accepting any text, these checks automatically analyze responses for several quality indicators. This includes looking at the length, relevance, and coherence of the text to determine if the participant provided a thoughtful answer or just typed junk to move on.

What is Gibberish Detection?

Gibberish detection automatically identifies nonsensical text like “asdfjkl” or a random mashing of keys in open-ended responses. Because manually finding these junk answers in a large dataset is nearly impossible, platforms use AI and machine learning to scan text and flag responses that are not coherent language. This saves countless hours of cleaning and ensures your qualitative analysis is based on real feedback, not keyboard spam.
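To make the idea concrete, here is a deliberately simple rule-based stand-in for the machine learning models described above. It is not how any particular platform works. It flags text with very few vowels or with long consonant runs, it assumes English-like text, and both thresholds are illustrative guesses.

```python
import re

VOWELS = set("aeiou")

def looks_like_gibberish(text, min_vowel_ratio=0.2, max_consonant_run=5):
    """Heuristic check: too few vowels or an overly long run of consonants."""
    lowered = text.lower()
    letters = [ch for ch in lowered if ch.isalpha()]
    if not letters:
        # Assumption: an answer with no letters at all is treated as junk.
        return True
    vowel_ratio = sum(ch in VOWELS for ch in letters) / len(letters)
    # Runs of letters that are not vowels (digits, spaces, punctuation break a run).
    consonant_runs = re.findall(r"[^aeiou\W\d_]+", lowered)
    longest = max((len(run) for run in consonant_runs), default=0)
    return vowel_ratio < min_vowel_ratio or longest > max_consonant_run

print(looks_like_gibberish("asdfjkl"))
print(looks_like_gibberish("I wish the battery lasted longer"))
```

A heuristic like this will misfire on acronyms, product codes, and languages with different letter patterns, which is one reason multilingual surveys tend to rely on trained language models instead.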

Spotting Copy-Paste and Single-Character Answers

Two other common red flags in open-ended questions are copy-pasted text and single-character answers.

Both indicate a lack of effort and provide no value. Automated checks easily catch these behaviors, allowing you to filter out responses that are effectively empty.
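Both checks can be approximated with basic string handling. In this sketch, "copy-paste" is interpreted as the same long answer appearing verbatim for more than one respondent, which is only one possible definition. The `min_copy_len` threshold and the sample answers are invented for illustration.

```python
from collections import Counter

def normalize(text):
    """Lowercase the text and collapse whitespace so trivial edits still match."""
    return " ".join(text.lower().split())

def flag_low_effort(answers, min_copy_len=20):
    """Return respondent ID -> reason for answers that look low effort.

    answers maps a respondent ID to the free-text answer for one question.
    Short answers such as "yes" are never treated as copy-paste, because
    many honest respondents will give the same short reply.
    """
    counts = Counter(normalize(text) for text in answers.values())
    flags = {}
    for rid, text in answers.items():
        norm = normalize(text)
        if len(norm) <= 1:
            flags[rid] = "single_character"
        elif len(norm) >= min_copy_len and counts[norm] > 1:
            flags[rid] = "possible_copy_paste"
    return flags

answers = {
    "r1": "x",
    "r2": "Great product with excellent value for money.",
    "r3": "great product  with excellent value for money.",
    "r4": "Battery life could be better",
}
print(flag_low_effort(answers))
```

The same approach extends to comparing answers against the question text itself, which catches respondents who paste the prompt back as their reply.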

What is an Off-Topic Answer Flag?

This flag identifies answers that are completely unrelated to the question asked. For example, if a question asks, “What features do you wish your smartphone had?” and the response is, “I like pizza,” the answer is flagged as off topic. Advanced AI-powered systems can analyze the language and coherence of a response to judge its relevance to the prompt. Filtering out these answers ensures your analysis remains focused and meaningful. Platforms like Yazi take this a step further, using AI to analyze, translate, and standardize open-ended answers from over 100 languages, making it easy to spot outliers that don’t make sense.

Why Robust Quality Checks Matter

Ensuring high-quality survey data is not just a technical exercise; it’s fundamental to generating insights you can trust. By implementing a full range of panel quality checks, covering speeding, gibberish, and other red flags, you can confidently separate meaningful feedback from noise.

The good news is you don’t have to do it all manually. Modern platforms are designed with these safeguards built in. For compliance details, review Yazi’s Data Security Executive Summary. Yazi’s market research platform, for instance, combines the incredible reach of WhatsApp in emerging markets with rigorous, AI-driven quality controls, delivering higher response rates and cleaner data. By making these practices a standard part of your research process, you can avoid costly errors and focus on what truly matters: understanding your audience.

Ready to elevate your data quality? Explore how Yazi works or sign up for a demo to see these checks in action on real WhatsApp-based surveys.

Frequently Asked Questions

What are the most common red flags in survey data?

The most common red flags include speeding (finishing too fast), straightlining (giving the same answer repeatedly), failing attention checks, providing gibberish or off-topic open-ended answers, and duplicate entries from the same person.

Why are panel quality checks for speeding and gibberish important?

These checks are critical because they filter out low-effort and fraudulent responses. Speeding indicates a lack of thoughtful consideration, while gibberish pollutes qualitative data with meaningless noise. Removing them ensures your conclusions are based on genuine, attentive feedback.

Can you have too many attention checks in a survey?

Yes. While helpful, too many trap questions can annoy or demotivate legitimate respondents, potentially causing them to answer differently or drop out of the survey entirely. It’s best to use them strategically alongside other, less intrusive quality checks.

How do you handle a respondent who fails a quality check?

Typically, a researcher will review the flagged response. Depending on the severity and frequency of the flags for that respondent, their entire survey may be removed from the final dataset. The goal is to remove low-quality data without unfairly penalizing a respondent for a single, minor mistake.
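One way to encode "severity and frequency" is a weighted score across all of a respondent's flags. The weights, flag names, and `discard_at` threshold below are hypothetical and would need tuning for each study; this is a sketch of the triage idea, not a recommended policy.

```python
# Hypothetical severity weights; adjust them for your own study.
SEVERITY = {
    "speeding": 2,
    "straightlining": 1,
    "failed_attention_check": 1,
    "gibberish": 2,
    "duplicate": 3,
}

def triage(flags, discard_at=3):
    """Turn a respondent's list of flags into keep / manual_review / discard."""
    score = sum(SEVERITY.get(flag, 1) for flag in flags)  # unknown flags weigh 1
    if score == 0:
        return "keep"
    if score >= discard_at:
        return "discard"
    return "manual_review"

print(triage([]))
print(triage(["straightlining"]))
print(triage(["speeding", "gibberish"]))
```

With these example weights, a single minor flag sends the response to a human reviewer, while several flags or one severe flag removes it from the dataset.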

What’s the difference between bot detection and impostor screening?

Bot detection focuses on identifying non-human, automated programs trying to complete surveys. Impostor screening focuses on real humans who are misrepresenting their identity or qualifications to gain access to a survey for which they are not eligible. Both are crucial forms of fraud prevention.
